I've looked at the log files now.

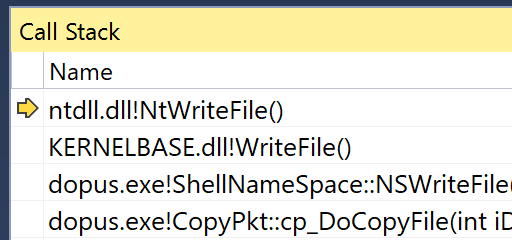

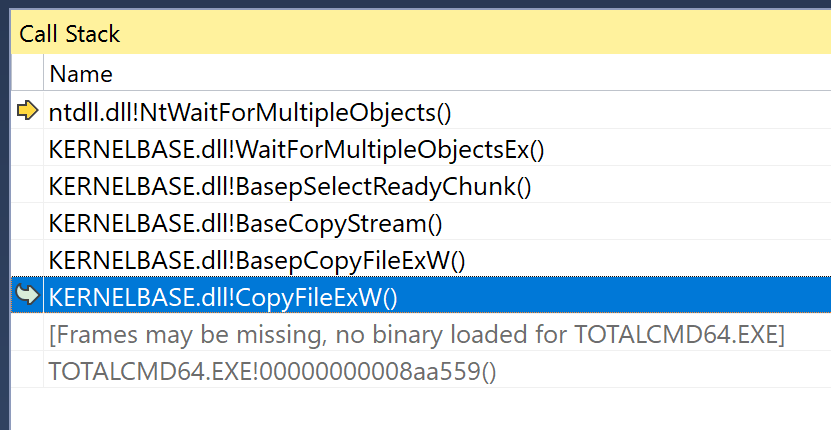

To answer the question of "How does Total Commander do it?", as you have it configured, TC was just calling the shell file copy API:

We've gone over that more than once in this thread and others.

The logs show both TC and RoboCopy are using the shell file copy API, in fact. So, again, we aren't comparing how your setup responds to lots of different programs vs Opus; we're comparing how your setup responds to the shell CopyFileEx API vs Opus. There are only two methods of copying files in play here.

That said, having logs of both TC and RoboCopy calling the same API was still useful as they show there is still quite a large difference between the two runs, despite them using the same very-high-level API to copy the file. (More on this below.)

That shows the speed varies a lot, even when doing things in an identical way. The margins of error here are large, into 10+ seconds.

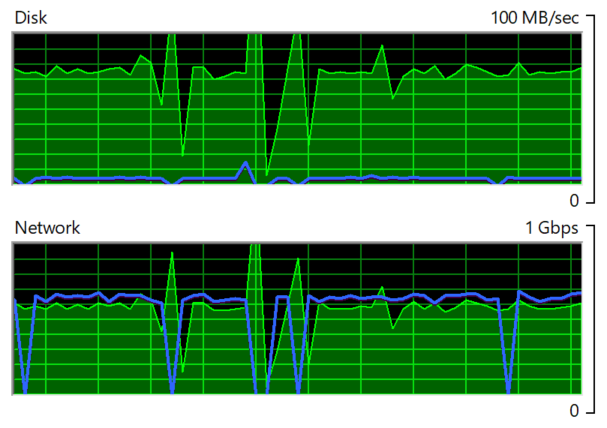

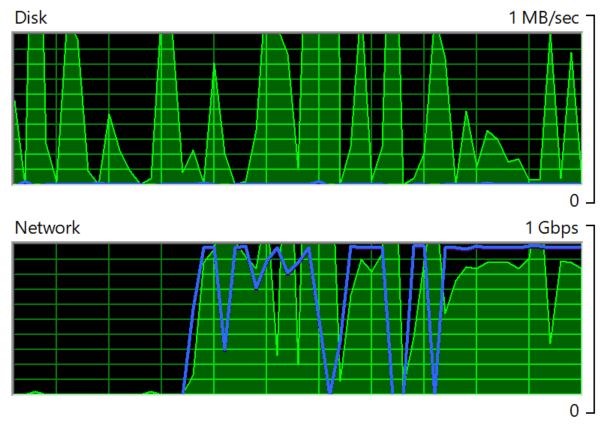

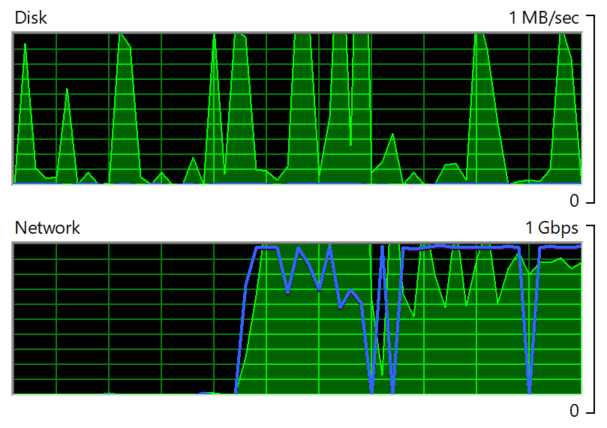

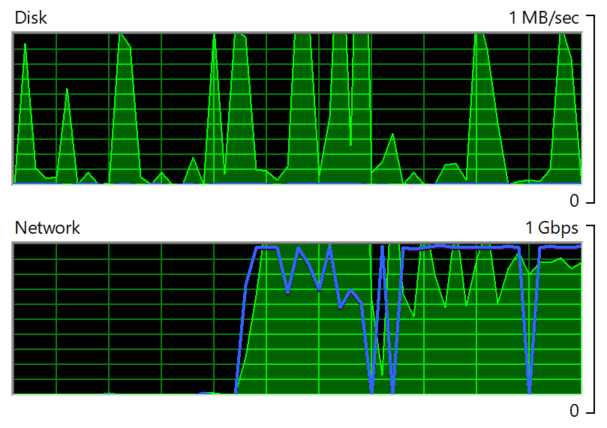

Your tests were done in this order: Opus, RoboCopy, TC. That put Opus at a potential disadvantage because, after it had copied the file, the file data may have been cached in RAM, causing the read side to be much faster in subsequent tests. That is backed up by your disk usage graphs during the copy:

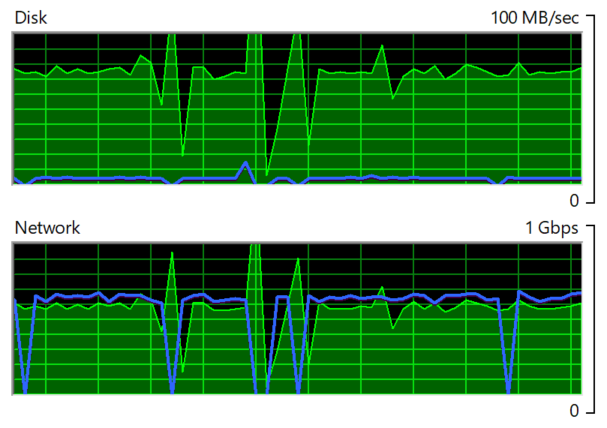

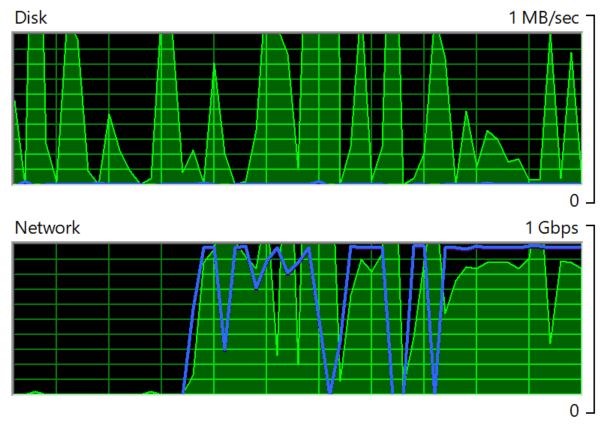

The disk usage graphs include all processes so we don't know how much is due to each program, but they give us a maximum usage.

Local disk activity while Opus was copying was about 80MB/s:

Local disk activity while RoboCopy was copying was virtually nothing:

(Note the scale change between screenshots: 100 MB/s vs 1 MB/s.)

Local disk activity while TC was copying was also virtually nothing:

As I've said many times, in many threads about copy speed, the order of the test can matter a lot, and tests should be repeated multiple times to account for potential caching. (Or you'd need to do a full power-down and reboot between tests, to wipe out the software and hardware caches.)

I doubt this accounts for all of the difference in this case, but it may account for some of it. (It's also possible it's fairly irrelevant, as the time spent waiting on the network massively dwarfs the time spent waiting on the local disk. But any proper test should avoid it as a potential unfairness.)
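To make the point about test order concrete, here is a minimal sketch of a harness that rotates which program goes first and reports the median and spread for each. The names `rotated_orders`, `run_rounds` and `summarize` are made up for illustration, and `copy_funcs` is assumed to be a dict of callables that each perform one copy (e.g. by launching the program in question). It does not flush any caches; it only stops one program from always running first.

```python
import statistics
import time


def rotated_orders(names):
    """Yield every rotation of names, so each entry goes first exactly once."""
    for i in range(len(names)):
        yield names[i:] + names[:i]


def run_rounds(copy_funcs, rounds_per_order=1, timer=time.perf_counter):
    """Time each copy function once per round, in every rotated order.

    copy_funcs: dict mapping a label to a zero-argument callable (hypothetical).
    Returns a dict mapping each label to its list of durations in seconds.
    """
    timings = {name: [] for name in copy_funcs}
    for order in rotated_orders(list(copy_funcs)):
        for _ in range(rounds_per_order):
            for name in order:
                start = timer()
                copy_funcs[name]()
                timings[name].append(timer() - start)
    return timings


def summarize(timings):
    """Return {label: (median seconds, max - min seconds)} for each label."""
    return {
        name: (statistics.median(values), max(values) - min(values))
        for name, values in timings.items()
    }
```

If the spread for a single program is of the same order as the gap between two programs, the gap on its own doesn't tell us much.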

Based purely on the ProcMon logs (an apples-to-apples comparison), and ignoring what each program reports as the copy speed/time (which is apples-to-oranges), we can compare the three tests like this:

File size was 7,948,206,080 bytes = 7580 MB

| Program | Buffer | Mode | Start | End | Duration | Speed | TWT |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Opus | 512 KB | Custom, Sync I/O | 18:59:04 | 19:00:47 | 103 sec | 73.6 MB/s | ~0.06 sec |
| RoboCopy | 1024 KB | CopyFileEx, Async I/O | 19:08:26 | 19:09:47 | 81 sec | 93.6 MB/s | ~0.07 sec |
| TC | 1024 KB | CopyFileEx, Async I/O | 19:13:05 | 19:14:19 | 74 sec | 102.4 MB/s | ~0.07 sec |
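For anyone who wants to check the Duration and Speed columns, they follow directly from the file size and the start/end timestamps. A small sketch of the arithmetic (the helper names are made up for illustration; MB here means 1024 × 1024 bytes, as in the file size line above):

```python
from datetime import datetime

FILE_SIZE_BYTES = 7_948_206_080
BYTES_PER_MB = 1024 * 1024

# Start and end times of each copy, as read from the ProcMon logs.
RUNS = {
    "Opus": ("18:59:04", "19:00:47"),
    "RoboCopy": ("19:08:26", "19:09:47"),
    "TC": ("19:13:05", "19:14:19"),
}


def duration_seconds(start, end):
    """Whole seconds between two HH:MM:SS timestamps on the same day."""
    fmt = "%H:%M:%S"
    delta = datetime.strptime(end, fmt) - datetime.strptime(start, fmt)
    return int(delta.total_seconds())


def speed_mb_per_s(size_bytes, seconds):
    """Average throughput in MB/s."""
    return size_bytes / BYTES_PER_MB / seconds


for name, (start, end) in RUNS.items():
    secs = duration_seconds(start, end)
    print(f"{name}: {secs} sec, {speed_mb_per_s(FILE_SIZE_BYTES, secs):.1f} MB/s")
```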

Observations:

- Using a 1 MB (1024 KB) buffer size in Opus may be worth a try, so things are even there.

- There's a 7 second difference between the RoboCopy and TC tests (81 vs 74 seconds), despite both using the same high-level API to copy the data. That's over a quarter of the 22 second difference between Opus and RoboCopy, and shows we have quite a large margin of error. On top of the caching issue mentioned above, it backs up what I've been saying about needing to do multiple tests. I doubt that this and/or the caching issue account for the whole difference; I just suspect things may be closer than they look.

- Each subsequent test is faster than the previous one, which may partially be due to caching (as discussed already). Some of that may also be down to random factors (which is why multiple tests are important).

- The TWT column is interesting, and may be the key to what's happening. This is what I've called "typical write time", and is (very roughly, and from a very quick look) about how long each WriteFile call takes.

  With the test setup, Opus pushed 512 KB of data each time it called WriteFile, and that took about 0.06 seconds each time. But the other programs, using CopyFileEx, were pushing twice as much data for only about 0.01 seconds of extra time. That is probably very significant.

  It suggests to me that some part of the system is not buffering data properly, or that the actual transfer times are being dwarfed by some other overhead (e.g. some part of the network stack, or antivirus). The fewer times WriteFile is called, the faster things get, which should not generally be the case with buffered writes. (The data each program hands to WriteFile should go into an appropriately sized buffer (or a 'sliding window' type system, or a cycle of multiple buffers, etc.) allocated by the operating system, and that buffer is what is actually sent over the network. The programs writing into the system-allocated buffer should be abstracted from it, but obviously aren't for some reason, hence the sensitivity to buffer sizes.)

Something that can happen with fast networks (depending on how they are configured, and the hardware involved) is that if they aren't given enough data to fill a full packet, they may delay for a moment to see if more data arrives. If more data arrives, they can pack it all together. If it doesn't, they may stop waiting and send an incomplete packet. How that is tuned can affect throughput and latency (which are usually in conflict with each other, and networks with different aims tune for one or the other, or a compromise between the two).

So maybe (some of) what we're seeing is one application-level buffer size working better with how this network is tuned than the other. The application-level buffer shouldn't matter if the operating system, network stack, etc. are all doing their jobs properly, but we aren't in an ideal world.

Another potential difference is that CopyFileEx is using asynchronous I/O while Opus is using synchronous I/O. Most software uses synchronous I/O, like Opus, as writing code for async I/O is extremely complex and error-prone. In theory, it should not make a difference when doing a simple, sequential write, as the operating system is supposed to take care of things with its own buffering. It certainly seems to in my own local tests; maybe it doesn't in all cases. (Unfortunately, when Microsoft worked on copy speed problems of their own back in Windows Vista, they changed the high-level CopyFileEx rather than addressing the low-level WriteFile/sync-buffering side of things. I have no idea why, other than they probably just wanted a quick fix to the more visible issue without doing the hard work of improving OS-wide performance that people were less likely to notice. Great for things that call CopyFileEx, but useless for everyone else.)

It's highly likely that these issues will affect just about everything that writes data to the network drive using anything other than CopyFileEx, as most software just opens a standard, buffered, synchronous write handle and writes data to it. CopyFileEx is actually the odd one out here, and the unusual case; it just happens that things which call CopyFileEx and Opus file copies are the things you tend to look at and that report speeds and times. (When was the last time you benchmarked how quickly Photoshop or whatever could write data to the network? I'm guessing never. But it and most other software are probably affected by this issue as well.)
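To illustrate what "opens a standard, buffered, synchronous write handle and writes data to it" looks like in practice, here is a rough sketch of that kind of copy loop with a configurable buffer size and per-write timing. This is not Opus's code; `sync_copy` is a made-up name, and Python adds its own buffering layer on top of the OS, so the timings are only indicative. On Windows each large `write` here should end up as a blocking WriteFile call underneath, but treat that as an assumption rather than something I've verified.

```python
import time


def sync_copy(src_path, dst_path, buffer_size=512 * 1024):
    """Copy a file using blocking reads and writes of buffer_size bytes.

    Returns a list with the duration (in seconds) of each write call,
    roughly comparable to the "typical write time" discussed above.
    """
    write_times = []
    with open(src_path, "rb") as src, open(dst_path, "wb") as dst:
        while True:
            chunk = src.read(buffer_size)
            if not chunk:
                break  # End of file reached.
            start = time.perf_counter()
            dst.write(chunk)  # Blocks until the data has been handed over.
            write_times.append(time.perf_counter() - start)
    return write_times
```

Running it against the network drive with `buffer_size=512 * 1024` and then `buffer_size=1024 * 1024` (several times each, in alternating order) would show whether other simple, synchronous writers are as sensitive to the buffer size on this setup.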

We could look into making Opus use async I/O but that is a complex change that would need a lot of testing, so it isn't going to happen overnight. It's also not guaranteed to account for whatever difference is left after the testing issues discussed above are corrected, but it might. Our biggest problem is that we have yet to reproduce any meaningful speed differences on our own setups between Opus and other software, which makes it difficult to test different theories.